Why do I say facial recognition wins? 3 key reasons.

- It is the only human-readable biometric modality, which, it turns out, is incredibly important to the humans who buy and use biometric systems

- Ubiquity of visible light cameras

- The perfect equilibrium of security and convenience

Allow me to explain.

A brief background of biometrics

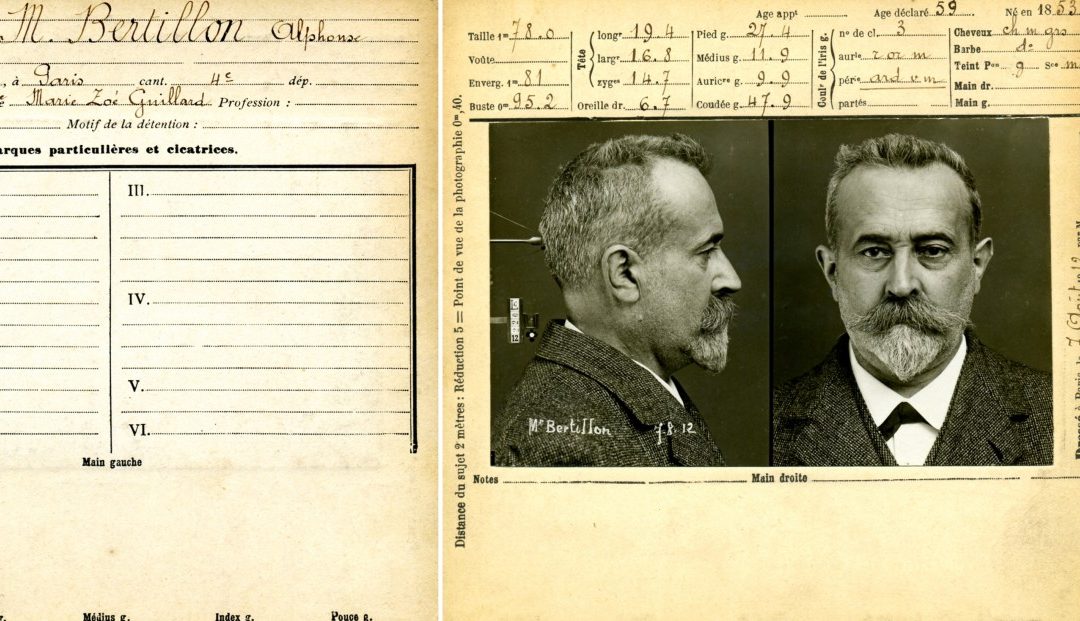

Biometric devices are everywhere these days: fingerprint readers, iris scanners, vein readers, and voice biometrics, just to name a few. From door entry and access control, to time punching, to phone access, science fiction is now reality, and has been for many years. As any fan of investigative crime drama or sci-fi cinema knows, we are obsessed with the ability of technology to identify a person by some characteristic unique to their physiology. The movie Minority Report portrays a remote iris scan. In the world of the movie Gattaca, people give blood for an instant DNA test that determines their social standing. From the way you walk, to the pressure on each foot as you stand, to the shape of your ear (yes, your ear), we are bombarded with an incredible set of unique human characteristics by which each of us can be identified. As you can see, engineers designing authentication systems have no shortage of choices! However, one biometric modality is becoming increasingly dominant for a few simple reasons. It is the oldest biometric system on the planet: your face.

To understand where biometrics are going, you must understand where biometrics came from, and a bit about human psychology. Around 1880, French criminologist Alphonse Bertillon created what most consider the first biometric system for identifying criminals. I guess it’s fitting that the first biometric system was tied to criminology from the beginning, given its pop-culture status in crime narratives today. Starting with bodily measurements and photos, and ultimately graduating to photos plus fingerprints, Bertillon’s techniques laid the foundation for criminal investigation and identification for generations, and many are still in use today. In case you’re wondering, he is also credited with inventing the mug shot. But to understand the origins of biometrics, you must go back even further.

It is estimated that 31,000 years ago, cave painters signed their work with fingerprints. Babylonians in 500 BC pressed their fingerprints into clay tablets to record business transactions. Yet even this doesn’t take us back to the roots of biometrics as a way to tell one person from another. To understand the history of biometrics, we must recognize that biometric identification is a biological ability possessed by all humans, one imprinted into our very DNA. The first biometric wasn’t fingerprints, or veins, or ears, or even voice.

It was facial recognition.

Reason # 1: Human Readability

Almost immediately after birth, humans are able to recognize and distinguish faces, and this ability grows in breadth and depth over time. Our brains are simply hard-wired to find the shape of a face. Ever wonder why you see faces in everyday objects, like clouds, the drain in your bathtub, or the front of a car? It is because the human brain is built not only to recognize faces, but to tell the faces of family, friends, and enemies apart. This ability was, is, and always will be incredibly important to our wellbeing, although in modern times it matters more for our social wellbeing than our physical wellbeing. Early human survival depended on our ability to organize in groups and identify members of our group. The human brain is better at distinguishing and identifying a unique face than other visual patterns like rocks, flowers, or the shapes of mountains.

In short, facial recognition is biological. It is human. Fingerprint, vein, ear, and even voice recognition are not. This is an incredibly important point, and in the end one of the MOST important reasons facial recognition will win out: all humans are incredibly adept at identifying other humans by their face, without any special training. From the least educated to the most educated, nearly all of us possess this ability. Not by the ear, iris, voice, or fingerprint, but by the face. More on that later.

But aren’t computers supposed to do all of this for us? If so, why does it matter? Before discussing human vs. automated biometrics, let’s cover two technical terms for background: false positives and false negatives. To really understand biometrics, you must recognize that every biometric modality has pros and cons, and many of those pros and cons are described in terms of these two rates.
(I apologize in advance for the technical detour, but it is essential to understanding why facial recognition is superior.)

False Positive

A false positive is in many ways the more intuitive of the two terms. Simply put, a false positive occurs when a system accepts the wrong person, matching a face to an identity that isn’t theirs. The next time you fly, the TSA agent will look at your ID, then look at your face. A false positive would allow the wrong person to pass as the right person. The false positive rate is the fraction of impostor attempts that are wrongly accepted. If you had a false positive rate of 0.01%, then 9,999 out of 10,000 times you would stop the “bad guy” from getting on the plane.
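To make the arithmetic concrete, here is a minimal Python sketch. The function names and the test counts are hypothetical, chosen only for illustration, and do not come from any real evaluation. The rate is simply false accepts divided by impostor attempts, so 0.01% works out to 1 false accept in 10,000 attempts, while 0.1% is 1 in 1,000.

```python
def false_positive_rate(false_accepts: int, impostor_attempts: int) -> float:
    """Share of impostor attempts (wrong person, claimed identity) that were wrongly accepted."""
    if impostor_attempts <= 0:
        raise ValueError("impostor_attempts must be positive")
    return false_accepts / impostor_attempts


def expected_stopped(rate: float, attempts: int) -> int:
    """Expected number of impostors stopped out of `attempts` at a given false positive rate."""
    return round(attempts * (1 - rate))


# Hypothetical test: 10,000 impostors each try to pass as someone else, and 1 gets through.
fpr = false_positive_rate(false_accepts=1, impostor_attempts=10_000)
print(f"false positive rate: {fpr:.2%}")                             # 0.01%
print(expected_stopped(fpr, 10_000), "of 10,000 impostors stopped")  # 9999
print(expected_stopped(0.001, 1_000), "of 1,000 impostors stopped")  # 999 (a 0.1% rate)
```

Keeping the rate as a plain fraction and formatting it as a percentage only at print time avoids the easy slip of confusing 0.01% with 0.1%.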

False Negative

A false negative is the opposite problem. A good friend of mine lost a significant amount of weight over the past few years. We flew out of a small airport together, and the TSA agent looked at his ID, looked at him, and required a second ID. Comparing the ID photo to his face, the agent could not confirm that it was in fact my friend. This is a false negative: falsely rejecting a person’s identity when it is, in fact, the right person. So, if my false negative rate is 1%, then 99 out of 100 times I’ll let the “good guys” onto the plane, but the one person who lost a lot of weight may need to step aside and prove he is who he says he is. Make sense?

Most biometric systems have excellent (that is, very low) false positive rates. In this regard, biometrics are like the strict TSA agent questioning everyone and everything. Imagine a TSA agent who is slow to let you past his post and quick to question your identity. This agent has a stellar record, never allowing the wrong person to pass. Iris, fingerprint, and palm print all have outstanding false positive rates. Iris recognition, according to some sources, can run millions of cross-comparisons without a single false positive. Facial recognition also boasts very good false positive rates. So, although some biometric modalities certainly have superior false positive rates, all of them deliver a competent, suitable rate for nearly any task requiring authentication and identification.

That brings us to the false negative rate: the innocent traveler trying to catch a flight, stuck with the pesky TSA agent who “just can’t quite tell” if that is you in the photo and won’t let you through security. You see, the dirty secret in biometrics is that many modalities actually have a poor false negative rate. Take a fingerprint reader, for example.
Did you know that approximately 5% of fingerprints cannot be read by a fingerprint reader? That means 1 in 20 people do not have fingerprints suitable for scanning. If you work in construction, that rate will be even higher. It is the false negatives of common biometrics that create an incredible dilemma. To be fair, facial recognition also suffers from a false negative rate that, depending on circumstances, can exceed 1%. However, unlike EVERY OTHER modality, facial recognition has a redeeming quality. As already established, humans are uniquely qualified to look at a face, compare it to another face, and determine whether the two match. In fact, until a few months ago, humans were better at this than any computational system on the planet: 97.25% of the time, an untrained human can look at one photo, compare it to another, and correctly determine whether they show the same person. Because of this incredible human super-ability, facial recognition is the only modality that can easily be audited by an untrained human. Under the right circumstances, this allows false negatives to be treated as “exceptions” and reviewed by a fellow human. Time collection, for example, is a workflow that allows for this type of process. Because we are all people, our psychology tells us that we are far more comfortable with a system that people can audit and check after the fact, and facial recognition fits that bill.
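The time-collection exception workflow can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not any vendor’s product: `PunchClock`, the 0.80 similarity threshold, and the `same_person` review callback are all assumptions, and the similarity scores stand in for whatever face matcher a real system would use. The design choice it shows is that a below-threshold match is queued for a person to check rather than rejected outright, so a false negative becomes a quick human review instead of a missed punch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PunchClock:
    """Hypothetical face-based time clock with a human-review exception queue."""
    threshold: float = 0.80  # assumed similarity cut-off; real systems tune this
    accepted: list[str] = field(default_factory=list)
    exceptions: list[tuple[str, str]] = field(default_factory=list)

    def punch(self, employee_id: str, similarity: float, photo: str) -> bool:
        """Record the punch if the matcher is confident; otherwise queue it for a person."""
        if similarity >= self.threshold:
            self.accepted.append(employee_id)
            return True
        # Possible false negative: keep the captured photo instead of discarding the punch.
        self.exceptions.append((employee_id, photo))
        return False

    def review(self, same_person: Callable[[str, str], bool]) -> int:
        """A human compares each queued photo to the enrolled one; returns how many were confirmed."""
        confirmed = 0
        for employee_id, photo in self.exceptions:
            if same_person(employee_id, photo):
                self.accepted.append(employee_id)
                confirmed += 1
        self.exceptions.clear()
        return confirmed


clock = PunchClock()
# 100 genuine punches by hypothetical employees; one (after a big weight loss) scores low.
for i in range(99):
    clock.punch(f"emp{i}", similarity=0.93, photo=f"cam/{i}.jpg")
clock.punch("emp99", similarity=0.61, photo="cam/99.jpg")

print(f"automated false negative rate: {len(clock.exceptions) / 100:.0%}")  # 1%
clock.review(lambda emp, photo: True)  # stand-in for a supervisor saying "yes, that's them"
print(len(clock.accepted), "of 100 punches recorded")  # 100 of 100 punches recorded
```

Because every punch in this toy run is genuine, the one queued punch is exactly the automated false negative rate (1 in 100), and the human review brings the recorded punches back to 100 of 100.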

Reason # 2: Ubiquity of visible light cameras

The visible light camera is arguably the most ubiquitous sensor in the world capable of capturing a biometric signature from a human. Cameras exist on street corners, in banks, built into ATMs and laptops, and in nearly every phone, including almost all so-called “dumb” phones. In short, the hardware is everywhere. No special camera to find the iris from a distance, no subdermal vein sensor tied to a punch clock, and no pressure pad to measure how you stand. A few smartphones with fingerprint readers aside, no other modality can support a BYOD (Bring-Your-Own-Device) approach to biometrics that satisfies everyone from the hourly worker on a construction site to the CEO of a company. In addition, if the past 30 years have taught us anything, it is that consumer hardware almost always wins. Based on this pattern, the days of specialty biometric hardware are numbered. Technology trends have a way of following the path of least resistance, and the path of least resistance is clearly the visible light camera.

Reason # 3: Perfect equilibrium between convenience and security

Remember that time your IT administrator made you change your password every 2 months, and it had to conform to a custom set of rules: 3 numbers, upper case letters, special characters, no dictionary words, and no repeats? Wow, that password was super secure, right? Wrong. What did you immediately do? You wrote your password on a sticky note on your monitor, or on a nearly equally insecure “virtual sticky note” in your unencrypted email or a text document on your hard drive. One thing is certain: you can build the highest security known to humankind, but if humans are meant to use it and convenience is an afterthought, it is HUMANS who will make the system insecure. I’d take this one step further. Convenience always wins where humans are concerned. If a system is too inconvenient, humans will either find a way to kill it or work around it, leaving only an illusion of security. In the end, it is ease of adoption and use that will make or break any system, but especially a biometric system.

Facial recognition is both adequately secure for most purposes and the most convenient of any biometric modality, based both on ease of use and on the ubiquity of sensors discussed previously. Add in that the modality is human-auditable, so false negatives can be resolved by a person, and it becomes incredibly convenient to use. There isn’t a close second place. And there is only upside when talking about convenience and facial recognition. Facial recognition, for the most part, is a passive technology. Although efforts are being made to make iris recognition passive, nothing matches the passive abilities of facial recognition. Although we use smartphones, time clocks, and wall-mounted panels for biometrics today, more and more systems will transition to cameras that are already installed, already recording and collecting data. As more of this work becomes passive, it will become even more convenient for people to use.
Imagine a day when walking into the factory punches me in to work, and leaving the factory punches me out. No time clocks. No smartphones. No scanning. Oh, and this will all be possible with the security cameras that are already installed. I guarantee employees won’t find a more convenient way to punch in and out of work than what was just described, all without sacrificing security. Imagine a day when door entry uses your own cell phone to open the door, rather than a cumbersome built-in biometric system. Science fiction? This is all right around the corner.

What about security? What about the perceived advantages of vein or iris scanners? I’ll leave you with this final thought: think of the most secure place you have ever been. Think back to the method the guard used to let you pass (because most secure places still have a human who authenticates you). Be it a TSA agent or a bank teller, they are using the oldest biometric on the planet: facial recognition. Hybrid automation with human audit capability is just the next evolution of this very old process.